AI Compute Service

Accelerate AI with Powerful Cloud Computing

Powerful, scalable AI computing with end-to-end cloud support for open-source models.

Key Highlights

Proven Expertise & Capabilities

Vast Computing Power

Instant access to immense computing power for training trillion-parameter models.

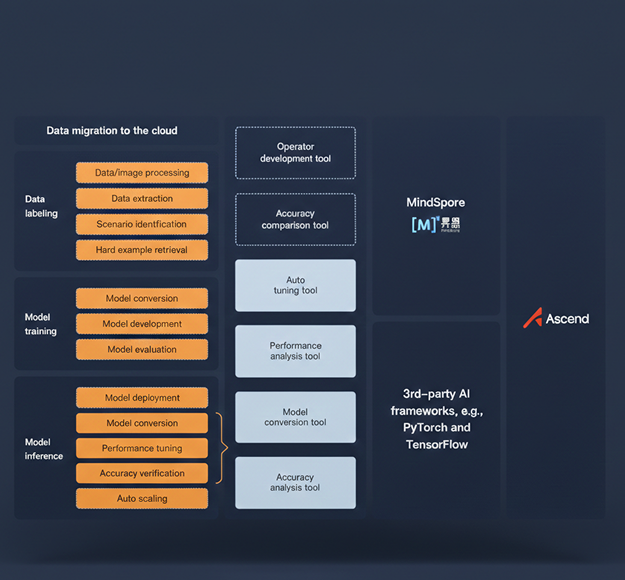

Complete Toolchains

End-to-end (E2E), cloud-based toolchains that work out of the box with no configuration, plus self-service migration for mainstream scenarios.

Efficient Long-Term Training

Training runs uninterrupted for 30+ days on 1,000+ cards, and failed training tasks are automatically recovered in less than 30 minutes.

Full-Stack Ecosystem

Major open-source models are adapted to run on the service, and more than 100,000 assets are available in AI Gallery.

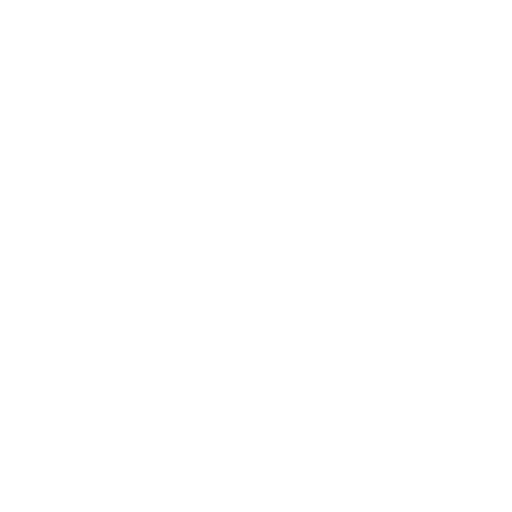
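The "Efficient Long-Term Training" highlight describes automatic recovery of failed training tasks only at the product level. As a generic illustration of the usual underlying idea (periodic checkpointing plus automatic restart from the latest checkpoint), here is a minimal single-process Python sketch. It does not use any Ascend, MindSpore, or cloud API; the JSON checkpoint format, the function names, and the `SimulatedFailure` fault are hypothetical.

```python
import json
import os
import tempfile


class SimulatedFailure(Exception):
    """Hypothetical stand-in for a node or card fault during training."""


def save_checkpoint(path, state):
    # Write to a temporary file and then rename it, so a crash mid-write
    # never leaves a half-written checkpoint behind.
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path):
    # With no checkpoint yet, training starts from step 0.
    if not os.path.exists(path):
        return {"step": 0, "loss_sum": 0.0}
    with open(path) as f:
        return json.load(f)


def train(path, total_steps, ckpt_every, fail_at=None):
    """Run training from the latest checkpoint; return (resumed_from_step, final_state)."""
    state = load_checkpoint(path)
    resumed_from = state["step"]
    while state["step"] < total_steps:
        if fail_at is not None and state["step"] == fail_at:
            raise SimulatedFailure(f"fault injected at step {state['step']}")
        # Placeholder for one real optimizer step.
        state["loss_sum"] += 1.0 / (state["step"] + 1)
        state["step"] += 1
        if state["step"] % ckpt_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return resumed_from, state


def run_with_auto_recovery(path, total_steps, ckpt_every, fail_at=None, max_restarts=3):
    """Restart the job after each fault; every restart resumes from the last checkpoint."""
    for attempt in range(max_restarts + 1):
        try:
            # In this demo, the fault is injected only on the first attempt.
            return train(path, total_steps, ckpt_every, fail_at if attempt == 0 else None)
        except SimulatedFailure:
            continue
    raise RuntimeError("job failed after too many restarts")


if __name__ == "__main__":
    ckpt = os.path.join(tempfile.mkdtemp(), "ckpt.json")
    resumed_from, state = run_with_auto_recovery(ckpt, total_steps=100, ckpt_every=10, fail_at=57)
    print(f"resumed from step {resumed_from}, finished at step {state['step']}")
```

In the demo, the fault at step 57 sends the job back to the checkpoint at step 50, so only 7 steps are redone. The checkpoint interval bounds the lost work, which is why real systems trade checkpoint frequency against checkpointing overhead.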

Architecture

AI Compute Service Architecture

Why Us

Why AI Compute Service?

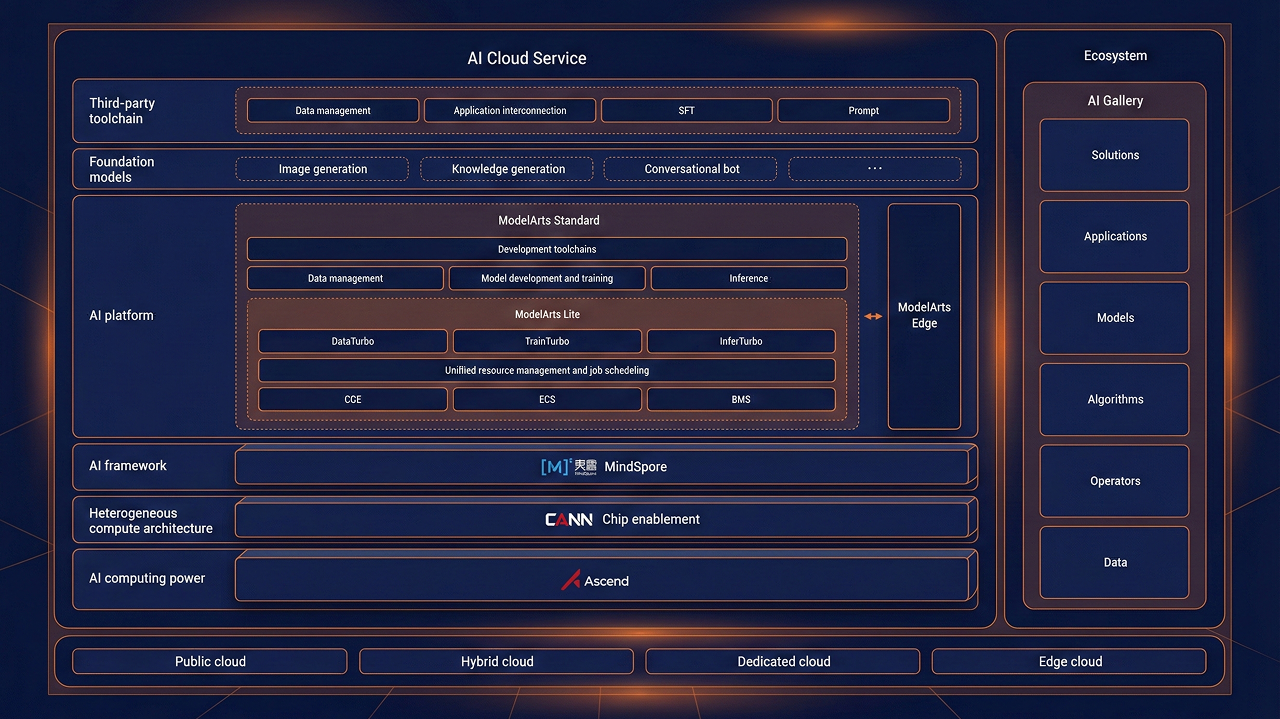

- Built on the Da Vinci architecture, Ascend delivers AI computing power that is 30% more cost-effective than competing offerings.

- MindSpore speeds up tuning of a 100-billion-parameter model by 60%.

- For typical scenarios, the E2E migration toolchain can migrate an AI application to a production environment in less than two weeks.

- Easy-to-use training and inference migration tools enable self-service migration.

- Unified resource scheduling and optimized allocation policies achieve 90% overall resource utilization.

- Elastic scheduling, along with converged resource scheduling for training and inference, ensures resource provisioning in less than 30 minutes.

- AI Gallery aggregates more than 100,000 domain-specific AI assets.

- Major open-source models are supported and specially adapted to run smoothly on Ascend AI.

Application Scenarios

Comprehensive Solutions

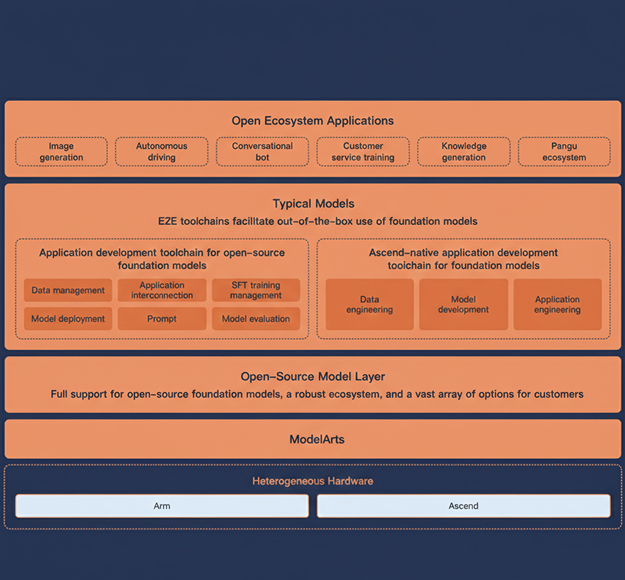

Full Support for Third-Party Open-Source Foundation Models, Fast Service Rollout for Customers

- E2E Toolchain for Out-of-the-Box Foundation Models

End-to-end cloud toolchains and Ascend-native AI agents accelerate foundation model deployment with no migration effort and fully support mainstream AI frameworks.

- Ascend-Compatible Open-Source Foundation Models

Major open-source foundation models are optimized for the Ascend architecture, delivering superior accuracy and performance. Full access to source code, container images, and performance metrics shortens migration from months to days.

- Ascend Toolchains for All Major Open-Source Foundation Models

Both the Ascend toolchain and the native toolchain are available for open-source foundation models, simplifying model tuning and toolchain integration and letting you choose the tools that best suit your needs.

Ascend Chips Significantly Improve AIGC Model Performance

- Model Conversion

Models can be converted to a MindSpore-compatible format in just a few minutes.

- Auto Tuning

Graph optimization is fully automated: model tuning policies are generated automatically, verified on NPUs, and refined iteratively based on the measured feedback.

- Performance and Accuracy Verification

Tools quantitatively analyze the performance and accuracy of models after migration to Ascend, ensuring no loss in performance or accuracy.
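The verification step above compares a migrated model against its original. The page does not describe how Ascend's tools do this, so the following is only a minimal Python sketch of a typical check, assuming you have flattened output values from a reference run and from the migrated run, plus a measured latency for each. The metric choice (element-wise tolerance test, cosine similarity, speedup), the default tolerances, and all names are illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import Sequence


@dataclass
class VerificationReport:
    max_abs_err: float
    cosine_similarity: float
    speedup: float
    accuracy_ok: bool


def verify_migration(reference: Sequence[float], migrated: Sequence[float],
                     ref_latency_ms: float, new_latency_ms: float,
                     rtol: float = 1e-3, atol: float = 1e-5) -> VerificationReport:
    """Compare migrated-model outputs and latency against a reference run."""
    if len(reference) != len(migrated):
        raise ValueError("output sizes differ")
    abs_errs = [abs(r - m) for r, m in zip(reference, migrated)]
    # Element-wise check in the style of numpy.allclose: |r - m| <= atol + rtol * |r|.
    accuracy_ok = all(e <= atol + rtol * abs(r) for e, r in zip(abs_errs, reference))
    # Cosine similarity summarizes how closely the two output vectors align overall.
    dot = sum(r * m for r, m in zip(reference, migrated))
    norm_r = math.sqrt(sum(r * r for r in reference))
    norm_m = math.sqrt(sum(m * m for m in migrated))
    if norm_r == 0.0 or norm_m == 0.0:
        cosine = 1.0 if norm_r == norm_m else 0.0
    else:
        cosine = dot / (norm_r * norm_m)
    return VerificationReport(
        max_abs_err=max(abs_errs, default=0.0),
        cosine_similarity=cosine,
        # Values above 1.0 mean the migrated model runs faster than the reference.
        speedup=ref_latency_ms / new_latency_ms,
        accuracy_ok=accuracy_ok,
    )


if __name__ == "__main__":
    report = verify_migration([1.0, 2.0, 3.0], [1.0005, 2.0, 2.999],
                              ref_latency_ms=20.0, new_latency_ms=10.0)
    print(report)
```

Appropriate tolerances depend on the model and numeric precision (for example, FP16 outputs usually need looser bounds than FP32), so in practice they would be set per model.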

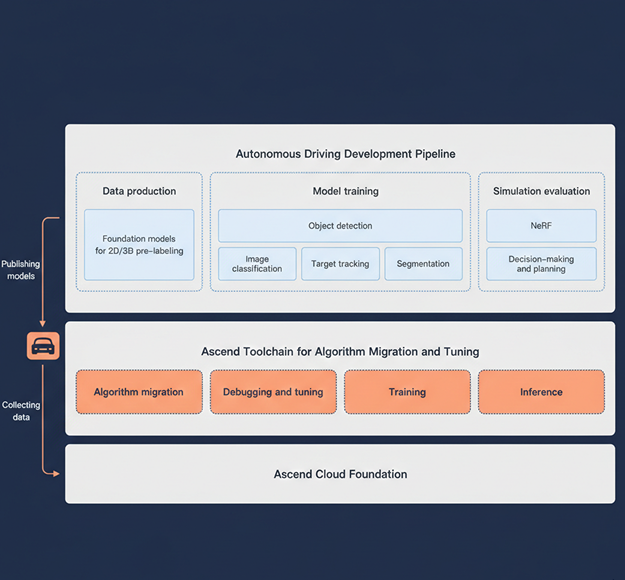

Efficient Training of Autonomous Driving Models on PB-Scale Data, Faster Innovation and Iteration

- In-Depth Optimization of Sensing and Simulation Algorithms

Algorithms across the entire autonomous driving pipeline, particularly those for sensing, route planning, control, simulation, and generation, have been extensively optimized, substantially improving performance.

- Large-Scale Distributed Training

Supports distributed training on petabytes of data.

- Compute Upgrade

Instant access to immense Ascend AI computing power accelerates algorithm development and iteration.
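The "Large-Scale Distributed Training" item does not say how petabyte-scale data is split across workers. One common approach in data-parallel training is for every worker to derive the same shuffled file order from a shared seed and then take a strided slice, so the shards are disjoint and together cover the whole dataset. The Python sketch below assumes that approach; `shard_files`, its parameters, and the file names are hypothetical and not part of any Ascend API.

```python
import random
from typing import List


def shard_files(files: List[str], world_size: int, rank: int,
                epoch: int, seed: int = 0) -> List[str]:
    """Return the files that worker `rank` reads in the given epoch."""
    if world_size < 1 or not 0 <= rank < world_size:
        raise ValueError("need world_size >= 1 and 0 <= rank < world_size")
    # Sort first so every worker starts from the same order, whatever the listing order.
    ordered = sorted(files)
    # A shared seed (offset by epoch) gives every worker the same permutation,
    # so reshuffling each epoch still yields disjoint shards.
    random.Random(seed + epoch).shuffle(ordered)
    # Strided slice: rank r takes items r, r + world_size, r + 2 * world_size, ...
    return ordered[rank::world_size]


if __name__ == "__main__":
    files = [f"part-{i:05d}" for i in range(10)]
    for r in range(4):
        print(r, shard_files(files, world_size=4, rank=r, epoch=0))
```

With 10 files and 4 workers, the shards hold 3, 3, 2, and 2 files. Synchronous training frameworks typically pad or drop items so every worker processes the same number of batches per step.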

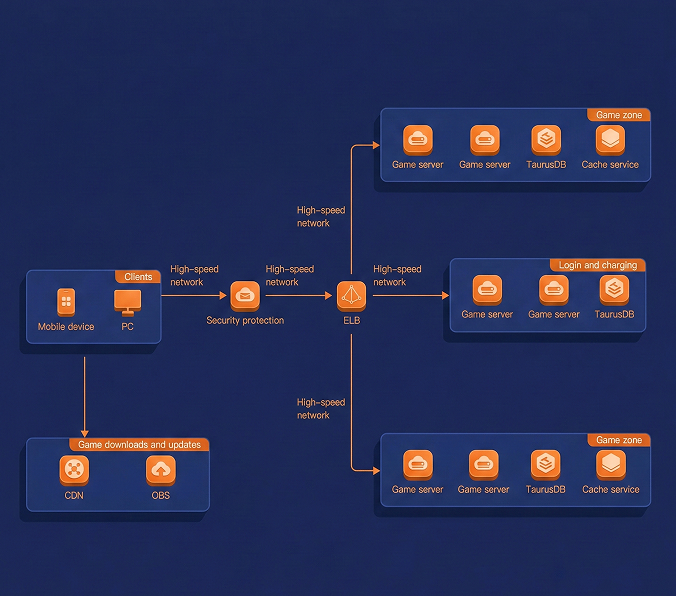

Ascend AI Cloud Service for Content Moderation

Ascend AI Cloud Service offers robust, proven solutions for content moderation and fast migration of computer vision (CV) workloads to Ascend, meeting the compute and business continuity needs of content platforms and service providers.

- Fast Migration Evaluation

The Ascend migration toolchain can quickly analyze model operators, diagnose model accuracy, test and optimize model performance, and convert model formats.

- Cost-Effectiveness

Ascend chips boost the performance of content moderation models, outperforming competing solutions.