ModelArts

ModelArts is a one-stop AI platform that empowers developers and data scientists to rapidly build and deploy models, accelerating intelligent industry upgrades.

Key Highlights

Proven Expertise & Capabilities

E2E Model Development Pipeline

An end-to-end tool chain boosts development efficiency by 50% and fosters collaboration across DataOps, MLOps, and DevOps.

Ultra Large-Scale Training

A single job can train a model with a trillion parameters and process hundreds of petabytes of data.

Cost-effective AI Compute

Diverse compute with various specifications powers large-scale distributed training and inference acceleration.

High Reliability

Training jobs are automatically recovered from faults, ensuring a job failure rate of less than 0.5%. Training involving 10,000 cards can run uninterrupted for 30 days.

Why Us

Why Huawei Cloud ModelArts?

- Supports the management of 10,000-node compute clusters.

- Large-scale distributed training accelerates foundation model development.

- ExeML automates model design, parameter tuning and training, and model compression and deployment based on labeled data.

- ExeML can be used to create image classification, object detection, and sound classification models, meeting the demands of various fields.

- E2E AI development is managed in ModelArts Studio, boosting efficiency while maintaining records of the entire AI development process.

- Local IDE and ModelArts plug-ins are provided for seamless on-premises and in-cloud AI development with customizable running environments.

- Supports multiple deployment types, including real-time inference, batch inference, and edge inference.

Application Scenarios

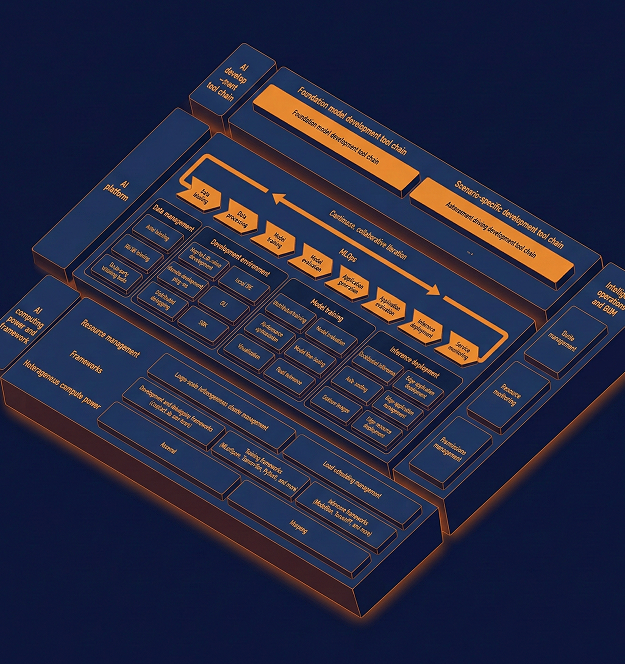

Architecture Overview

A Deep Dive Into ModelArts

ModelArts

A full-stack, full-lifecycle model development tool chain provides comprehensive AI tools and services to enable rapid service innovation.

- Efficient Development

An E2E model development pipeline to efficiently develop, debug, and optimize foundation model applications and scenario-specific applications

E2E monitoring tools for intelligent operations and O&M

MLOps-based AI model iteration to continuously and efficiently improve accuracy

Data-AI convergence, streamlining the E2E process of data services and AI development

- Efficient Running

AI acceleration suites for data, training, and inference acceleration, as well as distributed efficient training and inference

Cost-effective Ascend computing power

Large-scale heterogeneous clusters and scheduling management

- Efficient Migration

E2E cloud-based Ascend migration tool chain to support full-stack AI services

Professional migration service